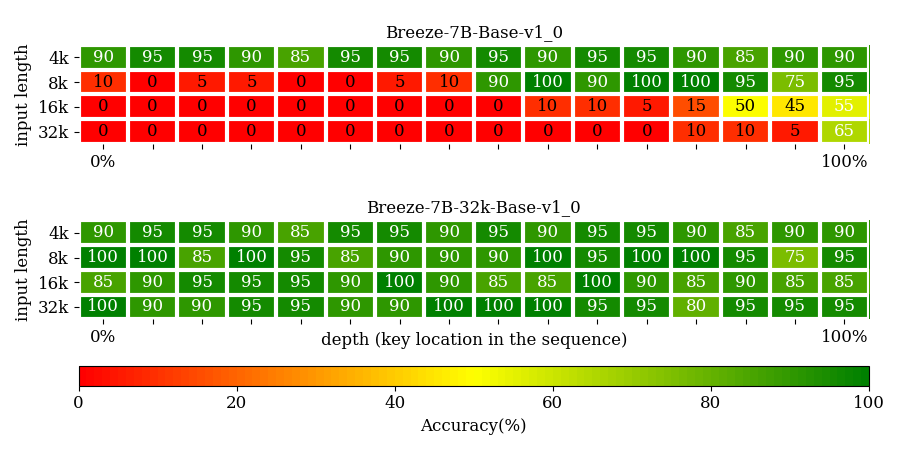

---
pipeline_tag: text-generation
license: apache-2.0
language:
- zh
- en
---

# Model Card for MediaTek Research Breeze-7B-32k-Base-v1_0

MediaTek Research Breeze-7B (hereinafter referred to as Breeze-7B) is a language model family that builds on [Mistral-7B](https://huggingface.co/mistralai/Mistral-7B-v0.1) and is specifically intended for Traditional Chinese use.

[Breeze-7B-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-Base-v1_0) is the base model for the Breeze-7B series.
It is suitable if you have substantial fine-tuning data to adapt it to your specific use case.

[Breeze-7B-Instruct](https://huggingface.co/MediaTek-Research/Breeze-7B-Instruct-v1_0) is derived from the base model Breeze-7B-Base, so it can be used as-is for common tasks.

[Breeze-7B-32k-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-32k-Base-v1_0) extends the base model with additional training data, a change of the RoPE base, and a disabled sliding window, giving it a 32k-token context length.
Roughly speaking, 32k tokens are equivalent to 44k Traditional Chinese characters.

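As a rough planning aid, the 32k-token ≈ 44k-character figure above implies about 1.34 Traditional Chinese characters per token. The sketch below turns that ratio into a quick check of whether a document fits in the context window. It assumes "32k" means 32,768 tokens and "44k" means 44,000 characters, and the 512-token output reserve is a hypothetical default; the real ratio depends on the text.

```python
import math

# Assumptions: "32k" = 32,768 tokens and "44k" = 44,000 characters, taken from
# the approximate figures in this card; the true ratio varies from text to text.
CONTEXT_TOKENS = 32_768
APPROX_CHARS = 44_000
CHARS_PER_TOKEN = APPROX_CHARS / CONTEXT_TOKENS  # about 1.34 characters per token


def estimate_tokens(num_chars: int, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Rough token count for a Traditional Chinese text of num_chars characters."""
    return math.ceil(num_chars / chars_per_token)


def fits_in_context(num_chars: int, reserve_for_output: int = 512) -> bool:
    """True if the estimated prompt plus a (hypothetical) generation budget fits."""
    return estimate_tokens(num_chars) + reserve_for_output <= CONTEXT_TOKENS


print(fits_in_context(20_000))  # True
print(fits_in_context(44_000))  # False: the prompt alone fills the window
```

For an exact count, `len(tokenizer.tokenize(text))` with the tokenizer loaded in the Use in Transformers section can replace `estimate_tokens`.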

[Breeze-7B-32k-Instruct](https://huggingface.co/MediaTek-Research/Breeze-7B-32k-Instruct-v1_0) is derived from the base model Breeze-7B-32k-Base, so it can be used as-is for common tasks.

Practicality-wise:

- Breeze-7B-Base expands the original vocabulary with an additional 30,000 Traditional Chinese tokens. With the expanded vocabulary, and everything else being equal, Breeze-7B runs inference on Traditional Chinese at twice the speed of Mistral-7B and Llama 7B. [See [Inference Performance](#inference-performance).]
- Breeze-7B-Instruct can be used as-is for common tasks such as Q&A, RAG, multi-round chat, and summarization.
- Breeze-7B-32k-Instruct can perform document-level tasks (for Chinese, 20 to 40 pages).

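The speed-up claim rests on token counts: a decoder-only model runs one forward pass per generated token, so covering the same Traditional Chinese text with half as many tokens halves the number of decoding steps. The back-of-the-envelope sketch below makes that explicit. The characters-per-token values are hypothetical placeholders, not measurements, and equal cost per step is assumed (the "everything else being equal" caveat; in practice the larger vocabulary makes the embedding and output layers somewhat bigger).

```python
import math


def decode_steps(num_chars: int, chars_per_token: float) -> int:
    """Forward passes needed to generate num_chars characters, one token per step."""
    return math.ceil(num_chars / chars_per_token)


def relative_speedup(num_chars: int, cpt_baseline: float, cpt_expanded: float) -> float:
    """Speed-up of the expanded tokenizer, assuming equal cost per decoding step."""
    return decode_steps(num_chars, cpt_baseline) / decode_steps(num_chars, cpt_expanded)


# Hypothetical ratios: the expanded vocabulary packs twice as many characters
# into each token as the original 32k vocabulary does.
BASELINE_CPT = 0.75  # hypothetical, original vocabulary
EXPANDED_CPT = 1.5   # hypothetical, expanded vocabulary

print(relative_speedup(1_000, BASELINE_CPT, EXPANDED_CPT))  # 2.0
```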

*A project by the members (in alphabetical order): Chan-Jan Hsu 許湛然, Feng-Ting Liao 廖峰挺, Po-Chun Hsu 許博竣, Yi-Chang Chen 陳宜昌, and the supervisor Da-Shan Shiu 許大山.*

## Features

- Breeze-7B-32k-Base-v1_0
  - Vocabulary expanded from 32k to 62k tokens to better support Traditional Chinese
  - 32k-token context length
- Breeze-7B-32k-Instruct-v1_0
  - Vocabulary expanded from 32k to 62k tokens to better support Traditional Chinese
  - 32k-token context length
  - Multi-turn dialogue (without special handling for harmfulness)

## Model Details

- Breeze-7B-32k-Base-v1_0
  - Pretrained from: [Breeze-7B-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-Base-v1_0)
  - Model type: Causal decoder-only transformer language model
  - Language: English and Traditional Chinese (zh-tw)
- Breeze-7B-32k-Instruct-v1_0
  - Finetuned from: [Breeze-7B-32k-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-32k-Base-v1_0)
  - Model type: Causal decoder-only transformer language model
  - Language: English and Traditional Chinese (zh-tw)

## Long-context Performance

#### Needle-in-a-haystack Performance

We use the passkey retrieval task to test the model's ability to attend to different depths in a given sequence.
A passkey is placed within a long, distracting document, and the model must retrieve it.
The passkey position is divided into 16 depth bins, with 20 test cases per bin.
Breeze-7B-32k-Base clears the task with 90+% accuracy, as shown in the figure below.

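The evaluation setup described above can be sketched as follows. This is a minimal illustration, not the authors' harness: the filler sentence, prompt wording, 5-digit passkey format, and placing the passkey by counting filler sentences (rather than tokens) are all assumptions; only the 16 bins and 20 cases per bin come from this card.

```python
import random
from dataclasses import dataclass

# Hypothetical filler and prompt text; the actual evaluation text is not given here.
FILLER = "The grass is green. The sky is blue. The sun is yellow. "
NUM_BINS = 16       # from the model card
CASES_PER_BIN = 20  # from the model card


@dataclass
class PasskeyCase:
    prompt: str
    passkey: str
    depth_bin: int


def make_case(depth_bin: int, num_filler: int, rng: random.Random) -> PasskeyCase:
    """Insert a random 5-digit passkey at a depth drawn from the given bin."""
    passkey = str(rng.randint(10_000, 99_999))
    # Insertion point, counted in filler sentences, inside this bin's depth range.
    low = depth_bin * num_filler // NUM_BINS
    high = (depth_bin + 1) * num_filler // NUM_BINS
    pos = rng.randrange(low, max(high, low + 1))
    haystack = [FILLER] * num_filler
    haystack.insert(pos, f"The pass key is {passkey}. Remember it. ")
    prompt = "".join(haystack) + "What is the pass key? The pass key is"
    return PasskeyCase(prompt, passkey, depth_bin)


def build_suite(num_filler: int = 400, seed: int = 0) -> list[PasskeyCase]:
    """All NUM_BINS x CASES_PER_BIN test cases, reproducible via the seed."""
    rng = random.Random(seed)
    return [make_case(b, num_filler, rng)
            for b in range(NUM_BINS) for _ in range(CASES_PER_BIN)]


def accuracy_per_bin(cases: list[PasskeyCase], answers: list[str]) -> dict[int, float]:
    """Fraction of cases in each bin whose answer contains the passkey."""
    hits: dict[int, list[bool]] = {}
    for case, answer in zip(cases, answers):
        hits.setdefault(case.depth_bin, []).append(case.passkey in answer)
    return {b: sum(h) / len(h) for b, h in sorted(hits.items())}
```

With a real model, `num_filler` would be tuned so the prompts reach the target token length, and `answers` would hold the model's continuation of each `case.prompt`.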

#### Long-DRCD Performance

| **Model/Performance (EM)** | **DRCD** | **DRCD-16k** | **DRCD-32k** | **DRCD-64k** |
|---------------------------|----------|--------------|--------------|--------------|
| **Breeze-7B-32k-Instruct-v1\_0** | | | | |
| **Breeze-7B-32k-Base-v1\_0** | 79.73 | 69.68 | 61.55 | 25.82 |
| **Breeze-7B-Base-v1\_0** | 80.61 | 21.79 | 15.29 | 12.63 |

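The table reports exact match (EM). A minimal sketch of EM scoring for extractive QA such as DRCD follows. The normalization (Unicode NFKC, then dropping whitespace and punctuation) and matching against any of several reference answers are assumptions of this sketch; the exact scoring script behind these numbers is not specified in this card.

```python
import unicodedata


def normalize(text: str) -> str:
    """NFKC-normalize, then drop whitespace and punctuation (assumed normalization)."""
    text = unicodedata.normalize("NFKC", text)
    return "".join(
        ch for ch in text
        if not ch.isspace() and not unicodedata.category(ch).startswith("P")
    )


def exact_match(prediction: str, references: list[str]) -> bool:
    """True if the normalized prediction equals any normalized reference answer."""
    pred = normalize(prediction)
    return any(pred == normalize(ref) for ref in references)


def em_score(predictions: list[str], references: list[list[str]]) -> float:
    """Corpus-level EM as a percentage, on the same 0-100 scale as the table."""
    hits = sum(exact_match(p, refs) for p, refs in zip(predictions, references))
    return 100.0 * hits / len(predictions)


print(em_score(["臺北市。", "高雄"], [["臺北市"], ["台中"]]))  # 50.0
```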

#### Short-Benchmark Performance

| **Model/Performance (EM)** | **TMMLU+** | **MMLU** | **TABLE** |
|---------------------------|----------|--------------|--------------|
| **Breeze-7B-32k-Instruct-v1\_0** | | | |
| **Breeze-7B-Instruct-v1\_0** | | | |

## Use in Transformers

First, install the direct dependencies:

```bash
pip install transformers torch accelerate
```

For faster inference with FlashAttention-2, also install these dependencies:

```bash
pip install packaging ninja
pip install flash-attn --no-build-isolation
```

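Passing `attn_implementation="flash_attention_2"` makes Transformers raise an error at load time if `flash-attn` is not installed. If the same script must also run on machines without it, one option is to pick the attention backend at runtime. This is a minimal sketch; falling back to `"sdpa"` (PyTorch scaled dot-product attention, which recent Transformers versions support for Mistral-architecture models) is an assumption of this sketch rather than a recommendation from this card.

```python
import importlib.util


def pick_attn_implementation() -> str:
    """Return "flash_attention_2" when flash-attn is importable, else "sdpa"."""
    # find_spec only checks that the package can be found; it does not verify
    # that the local GPU actually supports FlashAttention-2.
    if importlib.util.find_spec("flash_attn") is not None:
        return "flash_attention_2"
    return "sdpa"  # PyTorch scaled_dot_product_attention backend


print(pick_attn_implementation())
```

The result can be passed as `attn_implementation=pick_attn_implementation()` to `AutoModelForCausalLM.from_pretrained`. FlashAttention-2 additionally needs a supported NVIDIA GPU and half-precision (fp16 or bf16) weights.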

Then load the model and tokenizer in Transformers:

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained(
    "MediaTek-Research/Breeze-7B-32k-Base-v1_0",
    device_map="auto",
    torch_dtype=torch.bfloat16,
    attn_implementation="flash_attention_2",  # optional but highly recommended; requires flash-attn
)
tokenizer = AutoTokenizer.from_pretrained("MediaTek-Research/Breeze-7B-32k-Base-v1_0")

print(tokenizer.tokenize("你好,我可以幫助您解決各種問題、提供資訊和協助您完成許多不同的任務。例如:回答技術問題、提供建議、翻譯文字、尋找資料或協助您安排行程等。請告訴我如何能幫助您。"))
# Tokenized results
# ['▁', '你好', ',', '我', '可以', '幫助', '您', '解決', '各種', '問題', '、', '提供', '資訊', '和', '協助', '您', '完成', '許多', '不同', '的', '任務', '。', '例如', ':', '回答', '技術', '問題', '、', '提供', '建議', '、', '翻譯', '文字', '、', '尋找', '資料', '或', '協助', '您', '安排', '行程', '等', '。', '請', '告訴', '我', '如何', '能', '幫助', '您', '。']
```

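The printed tokenization also gives a quick, informal sense of how compactly the expanded vocabulary encodes Traditional Chinese. The sketch below recomputes characters per token from the token list shown above, using only the strings printed in this card and counting the leading `'▁'` (the marker for the space the tokenizer prepends) as a token. One sentence is an illustration, not a measurement; the ratio over long documents can differ.

```python
# Token list copied from the tokenizer output shown in this card.
TOKENS = [
    "▁", "你好", ",", "我", "可以", "幫助", "您", "解決", "各種", "問題", "、",
    "提供", "資訊", "和", "協助", "您", "完成", "許多", "不同", "的", "任務", "。",
    "例如", ":", "回答", "技術", "問題", "、", "提供", "建議", "、", "翻譯", "文字",
    "、", "尋找", "資料", "或", "協助", "您", "安排", "行程", "等", "。",
    "請", "告訴", "我", "如何", "能", "幫助", "您", "。",
]
SENTENCE = (
    "你好,我可以幫助您解決各種問題、提供資訊和協助您完成許多不同的任務。"
    "例如:回答技術問題、提供建議、翻譯文字、尋找資料或協助您安排行程等。"
    "請告訴我如何能幫助您。"
)


def chars_per_token(sentence: str, tokens: list[str]) -> float:
    """Characters of text covered per token, counting '▁' as a token."""
    covered = "".join(t for t in tokens if t != "▁")
    if covered != sentence:  # sanity check: tokens must rebuild the sentence
        raise ValueError("tokens do not reconstruct the sentence")
    return len(sentence) / len(tokens)


print(len(SENTENCE), len(TOKENS), round(chars_per_token(SENTENCE, TOKENS), 2))  # 79 51 1.55
```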

## Citation

```
@article{MediaTek-Research2024breeze7b,
  title={Breeze-7B Technical Report},
  author={Chan-Jan Hsu and Chang-Le Liu and Feng-Ting Liao and Po-Chun Hsu and Yi-Chang Chen and Da-Shan Shiu},
  year={2024},
  eprint={2403.02712},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```