Upload folder using huggingface_hub

- .gitattributes +1 -0

- LCM-LoRA-Technical-Report.pdf +3 -0

- README.md +273 -0

- pytorch_lora_weights.safetensors +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+LCM-LoRA-Technical-Report.pdf filter=lfs diff=lfs merge=lfs -text

LCM-LoRA-Technical-Report.pdf

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:23f42605e848334d433996c92a5baa12280b18730e56455f58f85d8f2f28f160
size 1726518

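The three lines above are a Git LFS pointer, not the PDF itself. Each line is a `key value` pair giving the spec version, the object ID (a SHA-256 digest of the real file) and the file size in bytes. The following is a minimal stdlib sketch for reading such a pointer. It assumes the simple one-pair-per-line layout shown here, and the function name `parse_lfs_pointer` is hypothetical, not part of any library:

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse a Git LFS pointer file into its key/value fields."""
    fields = {}
    for line in text.strip().splitlines():
        # each line is "<key> <value>", split at the first space
        key, _, value = line.partition(" ")
        fields[key] = value
    # the oid is "<algorithm>:<hex digest>"
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "oid_algorithm": algo,
        "oid": digest,
        "size": int(fields["size"]),
    }


pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:23f42605e848334d433996c92a5baa12280b18730e56455f58f85d8f2f28f160
size 1726518
"""

info = parse_lfs_pointer(pointer)
print(info["oid_algorithm"], info["size"])  # sha256 1726518
```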

README.md

ADDED

@@ -0,0 +1,273 @@

---
library_name: diffusers
base_model: stabilityai/stable-diffusion-xl-base-1.0
tags:
- lora
- text-to-image
license: openrail++
inference: false
---

# Latent Consistency Model (LCM) LoRA: SDXL

Latent Consistency Model (LCM) LoRA was proposed in [LCM-LoRA: A universal Stable-Diffusion Acceleration Module](https://arxiv.org/abs/2311.05556) by *Simian Luo, Yiqin Tan, Suraj Patil, Daniel Gu et al.*

It is a distilled consistency adapter for [`stable-diffusion-xl-base-1.0`](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0) that allows the number of inference steps to be reduced to only **2-8 steps**.

| Model | Params / M |
|----------------------------------------------------------------------------|------------|
| [lcm-lora-sdv1-5](https://huggingface.co/latent-consistency/lcm-lora-sdv1-5) | 67.5 |
| [lcm-lora-ssd-1b](https://huggingface.co/latent-consistency/lcm-lora-ssd-1b) | 105 |
| [**lcm-lora-sdxl**](https://huggingface.co/latent-consistency/lcm-lora-sdxl) | **197** |

## Usage

LCM-LoRA is supported in the 🤗 Hugging Face Diffusers library from version v0.23.0 onwards. To run the model, first install the latest version of the Diffusers library as well as `peft`, `accelerate` and `transformers`:

```bash
pip install --upgrade pip
pip install --upgrade diffusers transformers accelerate peft
```

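LCM-LoRA needs Diffusers v0.23.0 or later, so it can help to check the installed version against that minimum. The sketch below uses the standard-library `importlib.metadata`. The helpers `_numeric_parts` and `meets_minimum` are hypothetical and only compare the leading numeric `X.Y.Z` parts, so pre-release tags such as `.dev0` or `rc1` are ignored. `packaging.version` is the more robust choice where it is available.

```python
import re
from importlib.metadata import PackageNotFoundError, version


def _numeric_parts(v: str) -> list:
    """Leading integer of each dot-separated component, stopping at the first non-numeric one."""
    parts = []
    for piece in v.split("."):
        match = re.match(r"\d+", piece)
        if match is None:  # e.g. "dev0" in "0.24.0.dev0"
            break
        parts.append(int(match.group()))
    return parts


def meets_minimum(installed: str, minimum: str = "0.23.0") -> bool:
    """True if `installed` is at least `minimum` (pre-release tags are ignored)."""
    a, b = _numeric_parts(installed), _numeric_parts(minimum)
    width = max(len(a), len(b))
    # pad with zeros so "0.23" and "0.23.0" compare as equal
    a += [0] * (width - len(a))
    b += [0] * (width - len(b))
    return a >= b


if __name__ == "__main__":
    try:
        installed = version("diffusers")
        print(f"diffusers {installed}: LCM-LoRA supported: {meets_minimum(installed)}")
    except PackageNotFoundError:
        print("diffusers is not installed")
```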
***Note: For detailed usage examples, we recommend checking out our official [LCM-LoRA docs](https://huggingface.co/docs/diffusers/main/en/using-diffusers/inference_with_lcm_lora).***

### Text-to-Image

The adapter can be loaded with its base model `stabilityai/stable-diffusion-xl-base-1.0`. Next, the scheduler needs to be changed to [`LCMScheduler`](https://huggingface.co/docs/diffusers/v0.22.3/en/api/schedulers/lcm#diffusers.LCMScheduler), which lets us reduce the number of inference steps to just 2-8.
Please make sure to either disable guidance (`guidance_scale=0`) or use values between 1.0 and 2.0.

```python
import torch
from diffusers import LCMScheduler, AutoPipelineForText2Image

model_id = "stabilityai/stable-diffusion-xl-base-1.0"
adapter_id = "latent-consistency/lcm-lora-sdxl"

pipe = AutoPipelineForText2Image.from_pretrained(model_id, torch_dtype=torch.float16, variant="fp16")
pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)
pipe.to("cuda")

# load and fuse lcm lora
pipe.load_lora_weights(adapter_id)
pipe.fuse_lora()

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

# disable guidance_scale by passing 0
image = pipe(prompt=prompt, num_inference_steps=4, guidance_scale=0).images[0]
```

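`guidance_scale` controls classifier-free guidance (CFG). With CFG on, the UNet predicts noise for both an unconditional and a text-conditioned input, and the pipeline extrapolates from the first toward the second. Diffusers' Stable Diffusion XL pipelines only apply CFG when `guidance_scale > 1`. Passing `0` (as above) or `1` therefore uses the text-conditioned prediction alone and skips the extra unconditional evaluation at each step. The sketch below shows the standard combination on plain Python lists. The function name is hypothetical, and real pipelines operate on latent tensors.

```python
def apply_cfg(noise_uncond, noise_text, guidance_scale):
    """Classifier-free guidance: start from the unconditional prediction and
    move toward (and, for scales > 1, past) the text-conditioned one."""
    return [u + guidance_scale * (t - u) for u, t in zip(noise_uncond, noise_text)]


uncond = [0.0, 0.5, -1.0]
text = [1.0, 0.0, 1.0]

# a scale of 1 reproduces the text-conditioned prediction exactly; Diffusers
# pipelines treat any scale <= 1 (including 0) as "no guidance" and use it directly
print(apply_cfg(uncond, text, 1.0))  # [1.0, 0.0, 1.0]
# larger scales push further in the direction of the prompt
print(apply_cfg(uncond, text, 2.0))  # [2.0, -0.5, 3.0]
```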
### Inpainting

LCM-LoRA can be used for inpainting as well.

```python
import torch
from diffusers import AutoPipelineForInpainting, LCMScheduler
from diffusers.utils import load_image, make_image_grid

pipe = AutoPipelineForInpainting.from_pretrained(
    "diffusers/stable-diffusion-xl-1.0-inpainting-0.1",
    torch_dtype=torch.float16,
    variant="fp16",
).to("cuda")

# set scheduler
pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

# load LCM-LoRA
pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl")
pipe.fuse_lora()

# load base and mask image
init_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint.png").resize((1024, 1024))
mask_image = load_image("https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/diffusers/inpaint_mask.png").resize((1024, 1024))

prompt = "a castle on top of a mountain, highly detailed, 8k"
generator = torch.manual_seed(42)
image = pipe(
    prompt=prompt,
    image=init_image,
    mask_image=mask_image,
    generator=generator,
    num_inference_steps=5,
    guidance_scale=4,
).images[0]
make_image_grid([init_image, mask_image, image], rows=1, cols=3)
```

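In the inpainting call above, white areas of `mask_image` are regenerated and black areas are kept from `init_image`. As a rough mental model only (the SDXL inpainting UNet actually receives the mask and the masked-image latents as extra input channels rather than blending pixels), the effect resembles a per-pixel blend. A minimal sketch on 0-255 grayscale values, with a hypothetical `composite` helper:

```python
def composite(original, generated, mask):
    """Blend two grayscale images (lists of rows) with a 0-255 mask:
    255 (white) takes the generated pixel, 0 (black) keeps the original."""
    out = []
    for row_o, row_g, row_m in zip(original, generated, mask):
        out_row = []
        for o, g, m in zip(row_o, row_g, row_m):
            alpha = m / 255.0  # normalize the mask value to [0, 1]
            out_row.append(round(alpha * g + (1.0 - alpha) * o))
        out.append(out_row)
    return out


original = [[10, 10], [10, 10]]
generated = [[200, 200], [200, 200]]
mask = [[0, 255], [255, 0]]  # repaint the anti-diagonal only

print(composite(original, generated, mask))  # [[10, 200], [200, 10]]
```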
### Combine with styled LoRAs

LCM-LoRA can be combined with other LoRAs to generate styled images in very few steps (4-8). In the following example, we'll use the LCM-LoRA with the [papercut LoRA](https://huggingface.co/TheLastBen/Papercut_SDXL).
To learn more about how to combine LoRAs, refer to [this guide](https://huggingface.co/docs/diffusers/tutorials/using_peft_for_inference#combine-multiple-adapters).

```python
import torch
from diffusers import DiffusionPipeline, LCMScheduler

pipe = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0",
    variant="fp16",
    torch_dtype=torch.float16,
).to("cuda")

# set scheduler
pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

# load LoRAs
pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl", adapter_name="lcm")
pipe.load_lora_weights("TheLastBen/Papercut_SDXL", weight_name="papercut.safetensors", adapter_name="papercut")

# Combine LoRAs
pipe.set_adapters(["lcm", "papercut"], adapter_weights=[1.0, 0.8])

prompt = "papercut, a cute fox"
generator = torch.manual_seed(0)
image = pipe(prompt, num_inference_steps=4, guidance_scale=1, generator=generator).images[0]
image
```


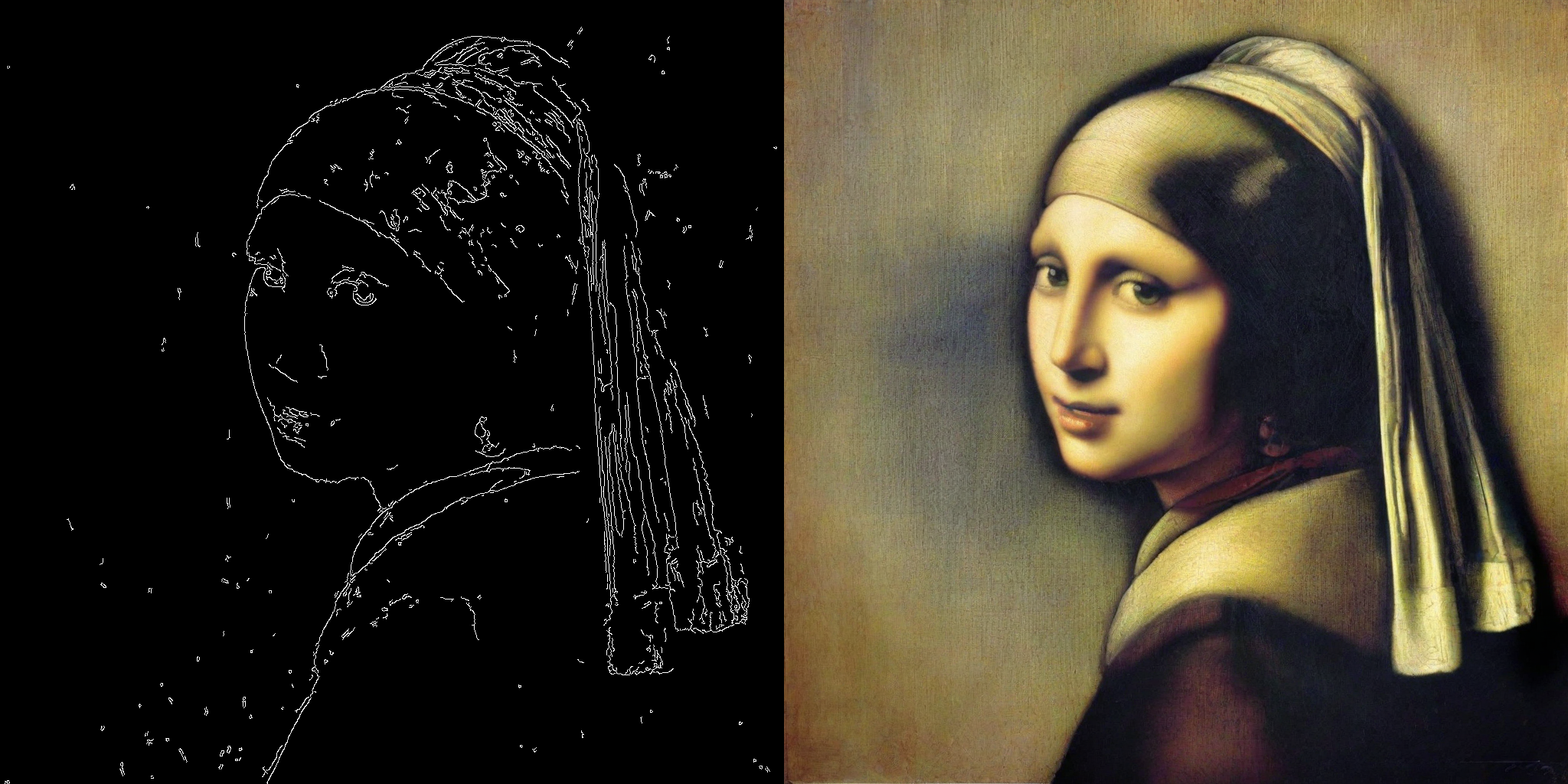
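Conceptually, each LoRA adds a low-rank update `B @ A` to a frozen weight matrix `W`. `set_adapters(..., adapter_weights=[1.0, 0.8])` scales each adapter's update before the updates are summed. `fuse_lora()`, used in the other examples, bakes such an update into `W` so that inference needs no extra computation. The sketch below shows that arithmetic on tiny plain-Python matrices. It is a simplification, not PEFT's implementation: it ignores the per-adapter `alpha / rank` scaling, and unfused adapters are really applied during the forward pass rather than materialized.

```python
def matmul(x, y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def add_scaled(w, delta, scale):
    """Return w + scale * delta, elementwise."""
    return [[a + scale * b for a, b in zip(rw, rd)] for rw, rd in zip(w, delta)]


# frozen 2x2 base weight
W = [[1.0, 0.0], [0.0, 1.0]]

# two rank-1 LoRAs: B is 2x1 and A is 1x2, so B @ A is a 2x2 update
lcm_B, lcm_A = [[1.0], [0.0]], [[0.0, 2.0]]
style_B, style_A = [[0.0], [1.0]], [[1.0, 0.0]]

# weighted combination, mirroring adapter_weights=[1.0, 0.8]
W_combined = add_scaled(W, matmul(lcm_B, lcm_A), 1.0)
W_combined = add_scaled(W_combined, matmul(style_B, style_A), 0.8)
print(W_combined)  # [[1.0, 2.0], [0.8, 1.0]]

# fusing is exact: the merged weight gives the same output as base + LoRA branches
x = [[1.0], [1.0]]
fused_out = matmul(W_combined, x)
branch_out = add_scaled(
    add_scaled(matmul(W, x), matmul(lcm_B, matmul(lcm_A, x)), 1.0),
    matmul(style_B, matmul(style_A, x)),
    0.8,
)
print(fused_out, branch_out)  # [[3.0], [1.8]] [[3.0], [1.8]]
```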

### ControlNet

This example shows how to use the LCM-LoRA with the [Canny ControlNet](https://huggingface.co/diffusers/controlnet-canny-sdxl-1.0-small) and SDXL.

```python
import torch
import cv2
import numpy as np
from PIL import Image

from diffusers import StableDiffusionXLControlNetPipeline, ControlNetModel, LCMScheduler
from diffusers.utils import load_image, make_image_grid

image = load_image(
    "https://hf.co/datasets/huggingface/documentation-images/resolve/main/diffusers/input_image_vermeer.png"
).resize((1024, 1024))

image = np.array(image)

low_threshold = 100
high_threshold = 200

# extract Canny edges and stack the single-channel edge map into a 3-channel image
image = cv2.Canny(image, low_threshold, high_threshold)
image = image[:, :, None]
image = np.concatenate([image, image, image], axis=2)
canny_image = Image.fromarray(image)

controlnet = ControlNetModel.from_pretrained("diffusers/controlnet-canny-sdxl-1.0-small", torch_dtype=torch.float16, variant="fp16")
pipe = StableDiffusionXLControlNetPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0",
    controlnet=controlnet,
    torch_dtype=torch.float16,
    variant="fp16",
).to("cuda")

# set scheduler
pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

# load LCM-LoRA
pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl")
pipe.fuse_lora()

generator = torch.manual_seed(0)
image = pipe(
    "picture of the mona lisa",
    image=canny_image,
    num_inference_steps=5,
    guidance_scale=1.5,
    controlnet_conditioning_scale=0.5,
    cross_attention_kwargs={"scale": 1},
    generator=generator,
).images[0]
make_image_grid([canny_image, image], rows=1, cols=2)
```

<Tip>

The inference parameters in this example might not work for all inputs, so we recommend trying different values for `num_inference_steps`, `guidance_scale`, `controlnet_conditioning_scale` and `cross_attention_kwargs` and choosing the best ones.

</Tip>

### T2I Adapter

This example shows how to use the LCM-LoRA with the [Canny T2I-Adapter](https://huggingface.co/TencentARC/t2i-adapter-canny-sdxl-1.0) and SDXL.

```python
import torch
import cv2
import numpy as np
from PIL import Image

from diffusers import StableDiffusionXLAdapterPipeline, T2IAdapter, LCMScheduler
from diffusers.utils import load_image, make_image_grid

# Prepare image
# Detect the canny map in low resolution to avoid high-frequency details
image = load_image(
    "https://huggingface.co/Adapter/t2iadapter/resolve/main/figs_SDXLV1.0/org_canny.jpg"
).resize((384, 384))

image = np.array(image)

low_threshold = 100
high_threshold = 200

image = cv2.Canny(image, low_threshold, high_threshold)
image = image[:, :, None]
image = np.concatenate([image, image, image], axis=2)
canny_image = Image.fromarray(image).resize((1024, 1024))

# load adapter (torch_dtype casts the weights to fp16)
adapter = T2IAdapter.from_pretrained("TencentARC/t2i-adapter-canny-sdxl-1.0", torch_dtype=torch.float16).to("cuda")

pipe = StableDiffusionXLAdapterPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0",
    adapter=adapter,
    torch_dtype=torch.float16,
    variant="fp16",
).to("cuda")

# set scheduler
pipe.scheduler = LCMScheduler.from_config(pipe.scheduler.config)

# load LCM-LoRA
pipe.load_lora_weights("latent-consistency/lcm-lora-sdxl")

prompt = "Mystical fairy in real, magic, 4k picture, high quality"
negative_prompt = "extra digit, fewer digits, cropped, worst quality, low quality, glitch, deformed, mutated, ugly, disfigured"

generator = torch.manual_seed(0)
image = pipe(
    prompt=prompt,
    negative_prompt=negative_prompt,
    image=canny_image,
    num_inference_steps=4,
    guidance_scale=1.5,
    adapter_conditioning_scale=0.8,
    adapter_conditioning_factor=1,
    generator=generator,
).images[0]
make_image_grid([canny_image, image], rows=1, cols=2)
```

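The two adapter arguments above control different things. `adapter_conditioning_scale` multiplies the adapter's features before they are added to the UNet. `adapter_conditioning_factor` is the fraction of denoising steps during which the adapter is applied at all. The sketch below is based on our reading of the `StableDiffusionXLAdapterPipeline` denoising loop and assumes that step `i` is conditioned when `i < int(num_inference_steps * adapter_conditioning_factor)`. Both helpers are hypothetical and written for illustration only.

```python
def adapter_active_steps(num_inference_steps: int, adapter_conditioning_factor: float) -> list:
    """For each denoising step index, whether adapter features are added."""
    cutoff = int(num_inference_steps * adapter_conditioning_factor)
    return [i < cutoff for i in range(num_inference_steps)]


def scale_features(features: list, adapter_conditioning_scale: float) -> list:
    """Scale adapter feature values before they are added to the UNet."""
    return [f * adapter_conditioning_scale for f in features]


# the example above: 4 steps with factor 1, so the adapter is used at every step
print(adapter_active_steps(4, 1.0))  # [True, True, True, True]
# a factor of 0.5 would only condition the first half of the steps
print(adapter_active_steps(4, 0.5))  # [True, True, False, False]
print(scale_features([1.0, -2.0], 0.8))  # [0.8, -1.6]
```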
## Speed Benchmark

TODO

## Training

TODO

pytorch_lora_weights.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a764e6859b6e04047cd761c08ff0cee96413a8e004c9f07707530cd776b19141
size 393855224

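The pointer's `size` field is consistent with the 197M parameter count in the README's table if the LoRA weights are stored at 2 bytes per parameter (fp16 or bf16). That storage precision is an assumption, not something this commit states. The check also ignores the file's small safetensors header.

```python
size_bytes = 393_855_224  # from the LFS pointer above
bytes_per_param = 2       # assumption: 16-bit (fp16/bf16) tensors

approx_params = size_bytes / bytes_per_param
print(f"{approx_params / 1e6:.1f}M parameters")  # 196.9M parameters
```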