LarFii committed on

Commit a25342b · Parent(s): 6e3b972

update insert custom kg

- Dockerfile +0 -56
- README.md +17 -53
- examples/insert_custom_kg.py +16 -18
- examples/lightrag_nvidia_demo.py +34 -25
- lightrag/__init__.py +1 -1
- lightrag/lightrag.py +36 -2
- lightrag/llm.py +12 -6
- lightrag/operate.py +3 -2

Dockerfile

DELETED

```diff
@@ -1,56 +0,0 @@
-FROM debian:bullseye-slim
-ENV JAVA_HOME=/opt/java/openjdk
-COPY --from=eclipse-temurin:17 $JAVA_HOME $JAVA_HOME
-ENV PATH="${JAVA_HOME}/bin:${PATH}" \
-    NEO4J_SHA256=7ce97bd9a4348af14df442f00b3dc5085b5983d6f03da643744838c7a1bc8ba7 \
-    NEO4J_TARBALL=neo4j-enterprise-5.24.2-unix.tar.gz \
-    NEO4J_EDITION=enterprise \
-    NEO4J_HOME="/var/lib/neo4j" \
-    LANG=C.UTF-8
-ARG NEO4J_URI=https://dist.neo4j.org/neo4j-enterprise-5.24.2-unix.tar.gz
-
-RUN addgroup --gid 7474 --system neo4j && adduser --uid 7474 --system --no-create-home --home "${NEO4J_HOME}" --ingroup neo4j neo4j
-
-COPY ./local-package/* /startup/
-
-RUN apt update \
-    && apt-get install -y curl gcc git jq make procps tini wget \
-    && curl --fail --silent --show-error --location --remote-name ${NEO4J_URI} \
-    && echo "${NEO4J_SHA256} ${NEO4J_TARBALL}" | sha256sum -c --strict --quiet \
-    && tar --extract --file ${NEO4J_TARBALL} --directory /var/lib \
-    && mv /var/lib/neo4j-* "${NEO4J_HOME}" \
-    && rm ${NEO4J_TARBALL} \
-    && sed -i 's/Package Type:.*/Package Type: docker bullseye/' $NEO4J_HOME/packaging_info \
-    && mv /startup/neo4j-admin-report.sh "${NEO4J_HOME}"/bin/neo4j-admin-report \
-    && mv "${NEO4J_HOME}"/data /data \
-    && mv "${NEO4J_HOME}"/logs /logs \
-    && chown -R neo4j:neo4j /data \
-    && chmod -R 777 /data \
-    && chown -R neo4j:neo4j /logs \
-    && chmod -R 777 /logs \
-    && chown -R neo4j:neo4j "${NEO4J_HOME}" \
-    && chmod -R 777 "${NEO4J_HOME}" \
-    && chmod -R 755 "${NEO4J_HOME}/bin" \
-    && ln -s /data "${NEO4J_HOME}"/data \
-    && ln -s /logs "${NEO4J_HOME}"/logs \
-    && git clone https://github.com/ncopa/su-exec.git \
-    && cd su-exec \
-    && git checkout 4c3bb42b093f14da70d8ab924b487ccfbb1397af \
-    && echo d6c40440609a23483f12eb6295b5191e94baf08298a856bab6e15b10c3b82891 su-exec.c | sha256sum -c \
-    && echo 2a87af245eb125aca9305a0b1025525ac80825590800f047419dc57bba36b334 Makefile | sha256sum -c \
-    && make \
-    && mv /su-exec/su-exec /usr/bin/su-exec \
-    && apt-get -y purge --auto-remove curl gcc git make \
-    && rm -rf /var/lib/apt/lists/* /su-exec
-
-
-ENV PATH "${NEO4J_HOME}"/bin:$PATH
-
-WORKDIR "${NEO4J_HOME}"
-
-VOLUME /data /logs
-
-EXPOSE 7474 7473 7687
-
-ENTRYPOINT ["tini", "-g", "--", "/startup/docker-entrypoint.sh"]
-CMD ["neo4j"]
```

README.md

CHANGED

|

@@ -42,9 +42,9 @@ This repository hosts the code of LightRAG. The structure of this code is based

|

|

| 42 |

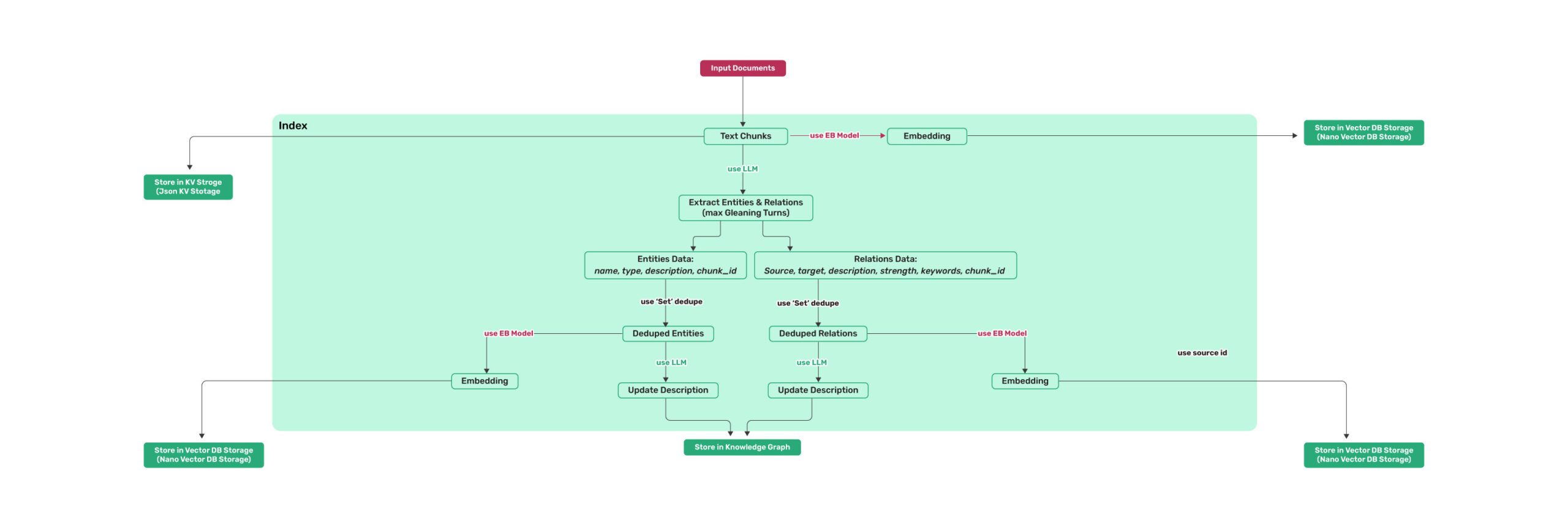

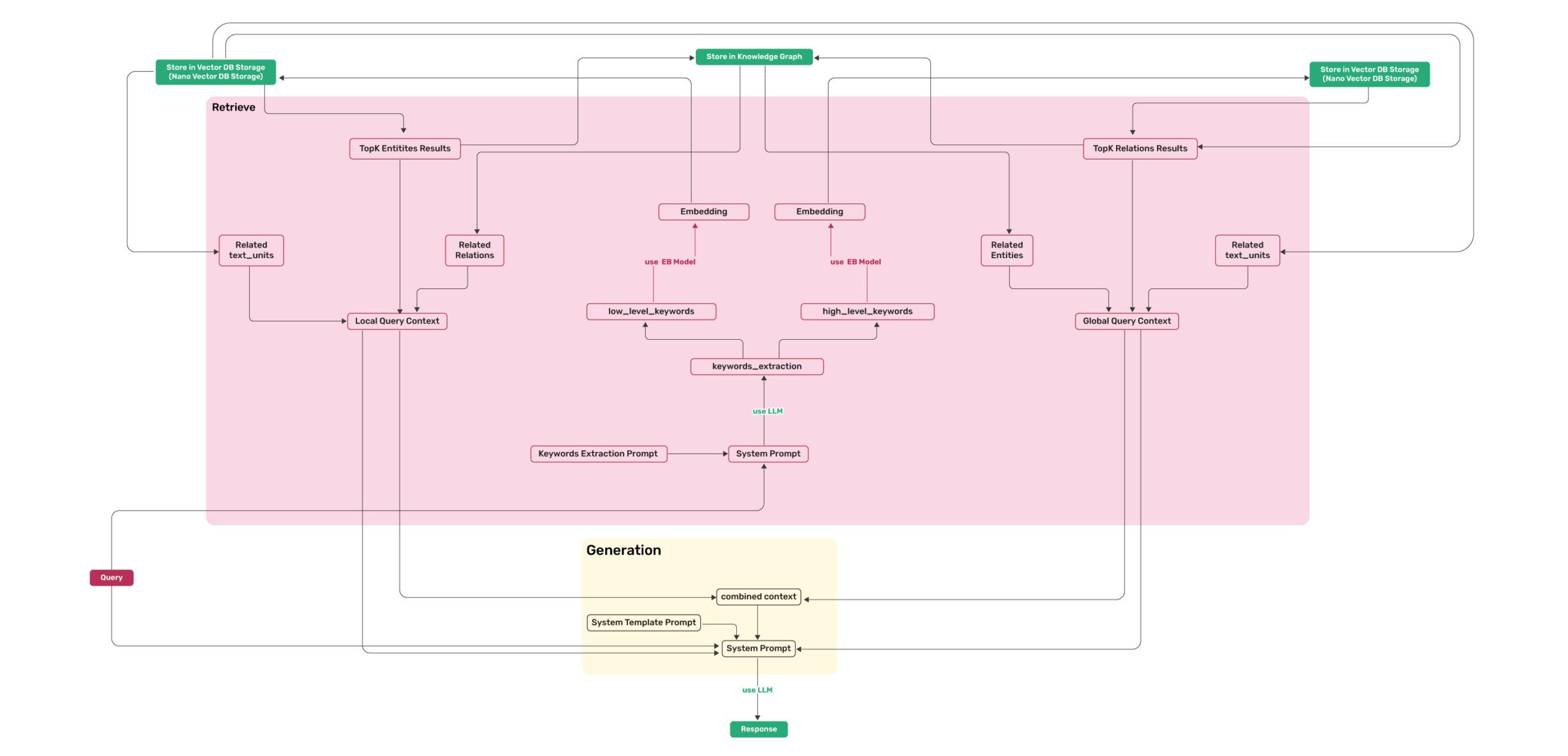

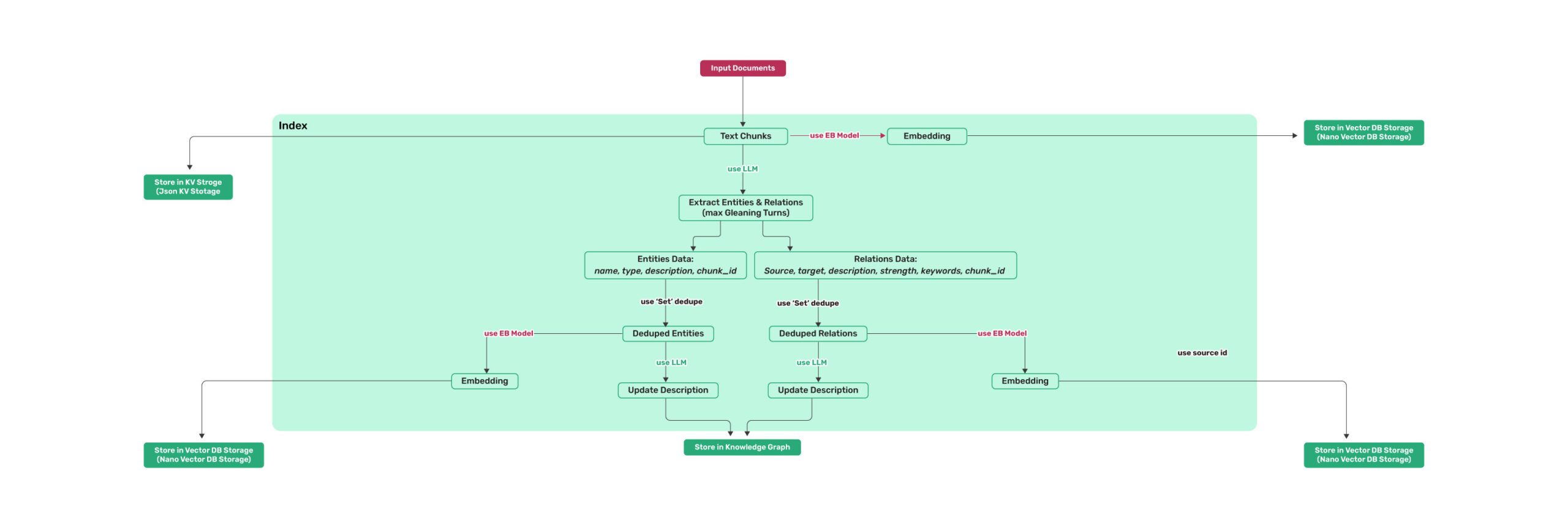

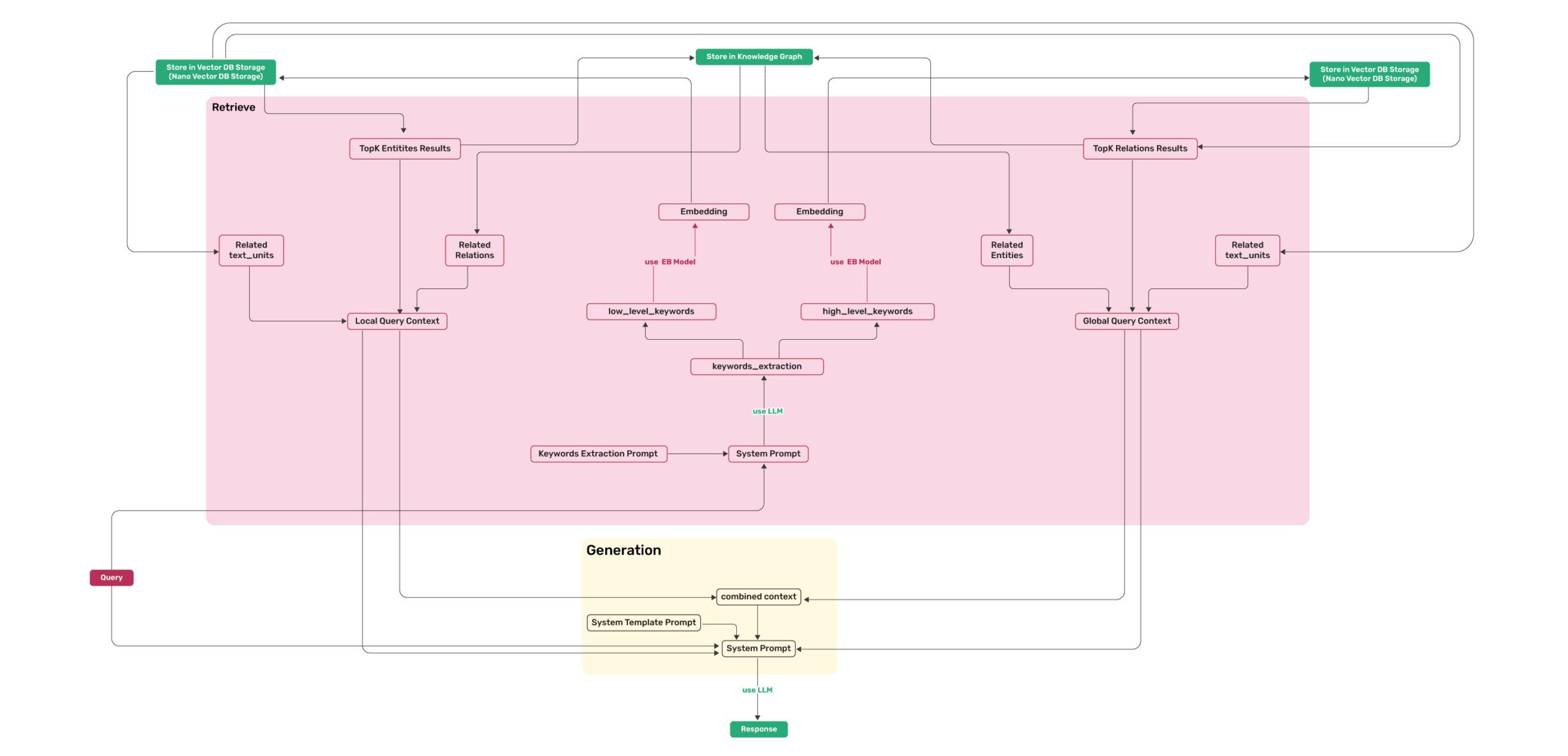

## Algorithm Flowchart

|

| 43 |

|

| 44 |

|

| 45 |

-

*Figure 1: LightRAG Indexing Flowchart*

|

| 46 |

|

| 47 |

-

*Figure 2: LightRAG Retrieval and Querying Flowchart*

|

| 48 |

|

| 49 |

## Install

|

| 50 |

|

|

@@ -364,7 +364,21 @@ custom_kg = {

|

|

| 364 |

"weight": 1.0,

|

| 365 |

"source_id": "Source1"

|

| 366 |

}

|

| 367 |

-

]

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 368 |

}

|

| 369 |

|

| 370 |

rag.insert_custom_kg(custom_kg)

|

|

@@ -947,56 +961,6 @@ def extract_queries(file_path):

|

|

| 947 |

```

|

| 948 |

</details>

|

| 949 |

|

| 950 |

-

## Code Structure

|

| 951 |

-

|

| 952 |

-

```python

|

| 953 |

-

.

|

| 954 |

-

├── examples

|

| 955 |

-

│ ├── batch_eval.py

|

| 956 |

-

│ ├── generate_query.py

|

| 957 |

-

│ ├── graph_visual_with_html.py

|

| 958 |

-

│ ├── graph_visual_with_neo4j.py

|

| 959 |

-

│ ├── lightrag_api_openai_compatible_demo.py

|

| 960 |

-

│ ├── lightrag_azure_openai_demo.py

|

| 961 |

-

│ ├── lightrag_bedrock_demo.py

|

| 962 |

-

│ ├── lightrag_hf_demo.py

|

| 963 |

-

│ ├── lightrag_lmdeploy_demo.py

|

| 964 |

-

│ ├── lightrag_ollama_demo.py

|

| 965 |

-

│ ├── lightrag_openai_compatible_demo.py

|

| 966 |

-

│ ├── lightrag_openai_demo.py

|

| 967 |

-

│ ├── lightrag_siliconcloud_demo.py

|

| 968 |

-

│ └── vram_management_demo.py

|

| 969 |

-

├── lightrag

|

| 970 |

-

│ ├── kg

|

| 971 |

-

│ │ ├── __init__.py

|

| 972 |

-

│ │ └── neo4j_impl.py

|

| 973 |

-

│ ├── __init__.py

|

| 974 |

-

│ ├── base.py

|

| 975 |

-

│ ├── lightrag.py

|

| 976 |

-

│ ├── llm.py

|

| 977 |

-

│ ├── operate.py

|

| 978 |

-

│ ├── prompt.py

|

| 979 |

-

│ ├── storage.py

|

| 980 |

-

│ └── utils.py

|

| 981 |

-

├── reproduce

|

| 982 |

-

│ ├── Step_0.py

|

| 983 |

-

│ ├── Step_1_openai_compatible.py

|

| 984 |

-

│ ├── Step_1.py

|

| 985 |

-

│ ├── Step_2.py

|

| 986 |

-

│ ├── Step_3_openai_compatible.py

|

| 987 |

-

│ └── Step_3.py

|

| 988 |

-

├── .gitignore

|

| 989 |

-

├── .pre-commit-config.yaml

|

| 990 |

-

├── Dockerfile

|

| 991 |

-

├── get_all_edges_nx.py

|

| 992 |

-

├── LICENSE

|

| 993 |

-

├── README.md

|

| 994 |

-

├── requirements.txt

|

| 995 |

-

├── setup.py

|

| 996 |

-

├── test_neo4j.py

|

| 997 |

-

└── test.py

|

| 998 |

-

```

|

| 999 |

-

|

| 1000 |

## Star History

|

| 1001 |

|

| 1002 |

<a href="https://star-history.com/#HKUDS/LightRAG&Date">

|

|

|

|

| 42 |

## Algorithm Flowchart

|

| 43 |

|

| 44 |

|

| 45 |

+

*Figure 1: LightRAG Indexing Flowchart - Img Caption : [Source](https://learnopencv.com/lightrag/)*

|

| 46 |

|

| 47 |

+

*Figure 2: LightRAG Retrieval and Querying Flowchart - Img Caption : [Source](https://learnopencv.com/lightrag/)*

|

| 48 |

|

| 49 |

## Install

|

| 50 |

|

|

|

|

| 364 |

"weight": 1.0,

|

| 365 |

"source_id": "Source1"

|

| 366 |

}

|

| 367 |

+

],

|

| 368 |

+

"chunks": [

|

| 369 |

+

{

|

| 370 |

+

"content": "ProductX, developed by CompanyA, has revolutionized the market with its cutting-edge features.",

|

| 371 |

+

"source_id": "Source1",

|

| 372 |

+

},

|

| 373 |

+

{

|

| 374 |

+

"content": "PersonA is a prominent researcher at UniversityB, focusing on artificial intelligence and machine learning.",

|

| 375 |

+

"source_id": "Source2",

|

| 376 |

+

},

|

| 377 |

+

{

|

| 378 |

+

"content": "None",

|

| 379 |

+

"source_id": "UNKNOWN",

|

| 380 |

+

},

|

| 381 |

+

],

|

| 382 |

}

|

| 383 |

|

| 384 |

rag.insert_custom_kg(custom_kg)

|

|

|

|

| 961 |

```

|

| 962 |

</details>

|

| 963 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 964 |

## Star History

|

| 965 |

|

| 966 |

<a href="https://star-history.com/#HKUDS/LightRAG&Date">

|

examples/insert_custom_kg.py

CHANGED

```diff
@@ -56,18 +56,6 @@ custom_kg = {
             "description": "An annual technology conference held in CityC",
             "source_id": "Source3",
         },
-        {
-            "entity_name": "CompanyD",
-            "entity_type": "Organization",
-            "description": "A financial services company specializing in insurance",
-            "source_id": "Source4",
-        },
-        {
-            "entity_name": "ServiceZ",
-            "entity_type": "Service",
-            "description": "An insurance product offered by CompanyD",
-            "source_id": "Source4",
-        },
     ],
     "relationships": [
         {
@@ -94,13 +82,23 @@ custom_kg = {
             "weight": 0.8,
             "source_id": "Source3",
         },
+    ],
+    "chunks": [
         {
-            "
-            "
-
-
-            "
-            "source_id": "
+            "content": "ProductX, developed by CompanyA, has revolutionized the market with its cutting-edge features.",
+            "source_id": "Source1",
+        },
+        {
+            "content": "PersonA is a prominent researcher at UniversityB, focusing on artificial intelligence and machine learning.",
+            "source_id": "Source2",
+        },
+        {
+            "content": "EventY, held in CityC, attracts technology enthusiasts and companies from around the globe.",
+            "source_id": "Source3",
+        },
+        {
+            "content": "None",
+            "source_id": "UNKNOWN",
         },
     ],
 }
```
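With this change, each entity and relationship is tied to a chunk through `source_id`. When no chunk carries that `source_id`, `ainsert_custom_kg` now logs a warning and stores `"UNKNOWN"` instead. A pre-insert check along the lines below could surface those gaps before calling `rag.insert_custom_kg`. `find_unmapped_sources` is a hypothetical helper, not part of LightRAG, and it assumes relationships name their endpoints with `src_id` / `tgt_id` keys (those keys are not visible in this diff).

```python
from typing import Any


def find_unmapped_sources(custom_kg: dict[str, Any]) -> dict[str, list[str]]:
    """Hypothetical pre-insert check (not a LightRAG API).

    Returns the entities and relationships whose source_id does not match the
    source_id of any chunk, i.e. the items ainsert_custom_kg would log with an
    UNKNOWN source_id.
    """
    # Every source_id declared by at least one chunk.
    chunk_sources = {chunk["source_id"] for chunk in custom_kg.get("chunks", [])}

    # Mirror the commit's default: a missing source_id is treated as "UNKNOWN".
    unmapped_entities = [
        entity["entity_name"]
        for entity in custom_kg.get("entities", [])
        if entity.get("source_id", "UNKNOWN") not in chunk_sources
    ]
    # Assumption: relationships name their endpoints with "src_id" / "tgt_id".
    unmapped_relationships = [
        f'{rel.get("src_id", "?")} -> {rel.get("tgt_id", "?")}'
        for rel in custom_kg.get("relationships", [])
        if rel.get("source_id", "UNKNOWN") not in chunk_sources
    ]
    return {"entities": unmapped_entities, "relationships": unmapped_relationships}


# Example: EventY cites Source3, but no chunk carries that source_id.
example_kg = {
    "chunks": [
        {
            "content": "ProductX, developed by CompanyA, has revolutionized the market with its cutting-edge features.",
            "source_id": "Source1",
        }
    ],
    "entities": [
        {"entity_name": "ProductX", "source_id": "Source1"},
        {"entity_name": "EventY", "source_id": "Source3"},
    ],
    "relationships": [],
}
print(find_unmapped_sources(example_kg))
```

Because a missing `source_id` defaults to `"UNKNOWN"`, the placeholder chunk `{"content": "None", "source_id": "UNKNOWN"}` in the example appears to give such items a chunk to map to, so the helper treats them as mapped once that chunk is present.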

examples/lightrag_nvidia_demo.py

CHANGED

```diff
@@ -1,11 +1,14 @@
 import os
 import asyncio
 from lightrag import LightRAG, QueryParam
-from lightrag.llm import
+from lightrag.llm import (
+    openai_complete_if_cache,
+    nvidia_openai_embedding,
+)
 from lightrag.utils import EmbeddingFunc
 import numpy as np
 
-#for custom llm_model_func
+# for custom llm_model_func
 from lightrag.utils import locate_json_string_body_from_string
 
 WORKING_DIR = "./dickens"
@@ -13,14 +16,15 @@ WORKING_DIR = "./dickens"
 if not os.path.exists(WORKING_DIR):
     os.mkdir(WORKING_DIR)
 
-#some method to use your API key (choose one)
+# some method to use your API key (choose one)
 # NVIDIA_OPENAI_API_KEY = os.getenv("NVIDIA_OPENAI_API_KEY")
-NVIDIA_OPENAI_API_KEY = "nvapi-xxxx"
+NVIDIA_OPENAI_API_KEY = "nvapi-xxxx"  # your api key
 
 # using pre-defined function for nvidia LLM API. OpenAI compatible
 # llm_model_func = nvidia_openai_complete
 
-
+
+# If you trying to make custom llm_model_func to use llm model on NVIDIA API like other example:
 async def llm_model_func(
     prompt, system_prompt=None, history_messages=[], keyword_extraction=False, **kwargs
 ) -> str:
@@ -37,36 +41,41 @@ async def llm_model_func(
         return locate_json_string_body_from_string(result)
     return result
 
-
+
+# custom embedding
 nvidia_embed_model = "nvidia/nv-embedqa-e5-v5"
+
+
 async def indexing_embedding_func(texts: list[str]) -> np.ndarray:
     return await nvidia_openai_embedding(
         texts,
-        model
+        model=nvidia_embed_model,  # maximum 512 token
         # model="nvidia/llama-3.2-nv-embedqa-1b-v1",
         api_key=NVIDIA_OPENAI_API_KEY,
         base_url="https://integrate.api.nvidia.com/v1",
-        input_type
-        trunc
-        encode
+        input_type="passage",
+        trunc="END",  # handling on server side if input token is longer than maximum token
+        encode="float",
     )
 
+
 async def query_embedding_func(texts: list[str]) -> np.ndarray:
     return await nvidia_openai_embedding(
         texts,
-        model
+        model=nvidia_embed_model,  # maximum 512 token
         # model="nvidia/llama-3.2-nv-embedqa-1b-v1",
         api_key=NVIDIA_OPENAI_API_KEY,
         base_url="https://integrate.api.nvidia.com/v1",
-        input_type
-        trunc
-        encode
+        input_type="query",
+        trunc="END",  # handling on server side if input token is longer than maximum token
+        encode="float",
     )
 
-
+
+# dimension are same
 async def get_embedding_dim():
     test_text = ["This is a test sentence."]
-    embedding = await indexing_embedding_func(test_text)
+    embedding = await indexing_embedding_func(test_text)
     embedding_dim = embedding.shape[1]
     return embedding_dim
 
@@ -88,29 +97,29 @@ async def main():
     embedding_dimension = await get_embedding_dim()
     print(f"Detected embedding dimension: {embedding_dimension}")
 
-    #lightRAG class during indexing
+    # lightRAG class during indexing
     rag = LightRAG(
         working_dir=WORKING_DIR,
         llm_model_func=llm_model_func,
-        # llm_model_name="meta/llama3-70b-instruct", #un comment if
+        # llm_model_name="meta/llama3-70b-instruct", #un comment if
         embedding_func=EmbeddingFunc(
             embedding_dim=embedding_dimension,
-            max_token_size=512,
-            #so truncate (trunc) parameter on embedding_func will handle it and try to examine the tokenizer used in LightRAG
-            #so you can adjust to be able to fit the NVIDIA model (future work)
+            max_token_size=512,  # maximum token size, somehow it's still exceed maximum number of token
+            # so truncate (trunc) parameter on embedding_func will handle it and try to examine the tokenizer used in LightRAG
+            # so you can adjust to be able to fit the NVIDIA model (future work)
             func=indexing_embedding_func,
         ),
     )
-
-    #reading file
+
+    # reading file
     with open("./book.txt", "r", encoding="utf-8") as f:
         await rag.ainsert(f.read())
 
-    #redefine rag to change embedding into query type
+    # redefine rag to change embedding into query type
     rag = LightRAG(
         working_dir=WORKING_DIR,
         llm_model_func=llm_model_func,
-        # llm_model_name="meta/llama3-70b-instruct", #un comment if
+        # llm_model_name="meta/llama3-70b-instruct", #un comment if
         embedding_func=EmbeddingFunc(
             embedding_dim=embedding_dimension,
             max_token_size=512,
```
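The demo offers two ways to supply the key: reading `NVIDIA_OPENAI_API_KEY` from the environment (commented out) or hard-coding `"nvapi-xxxx"`. The environment route keeps the key out of source control. A minimal sketch, where `get_nvidia_api_key` is a hypothetical helper that fails early instead of sending requests with an empty key:

```python
import os


def get_nvidia_api_key(env_var: str = "NVIDIA_OPENAI_API_KEY") -> str:
    """Hypothetical helper: read the key from the environment and fail early if unset."""
    api_key = os.getenv(env_var)
    if not api_key:
        # Surfacing the problem here is clearer than an authentication error from the API later.
        raise RuntimeError(f"Set the {env_var} environment variable to your NVIDIA API key.")
    return api_key
```

In the demo, `NVIDIA_OPENAI_API_KEY = get_nvidia_api_key()` would replace the hard-coded assignment.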

lightrag/__init__.py

CHANGED

```diff
@@ -1,5 +1,5 @@
 from .lightrag import LightRAG as LightRAG, QueryParam as QueryParam
 
-__version__ = "1.0.
+__version__ = "1.0.3"
 __author__ = "Zirui Guo"
 __url__ = "https://github.com/HKUDS/LightRAG"
```

lightrag/lightrag.py

CHANGED

```diff
@@ -329,13 +329,39 @@ class LightRAG:
     async def ainsert_custom_kg(self, custom_kg: dict):
         update_storage = False
         try:
+            # Insert chunks into vector storage
+            all_chunks_data = {}
+            chunk_to_source_map = {}
+            for chunk_data in custom_kg.get("chunks", []):
+                chunk_content = chunk_data["content"]
+                source_id = chunk_data["source_id"]
+                chunk_id = compute_mdhash_id(chunk_content.strip(), prefix="chunk-")
+
+                chunk_entry = {"content": chunk_content.strip(), "source_id": source_id}
+                all_chunks_data[chunk_id] = chunk_entry
+                chunk_to_source_map[source_id] = chunk_id
+                update_storage = True
+
+            if self.chunks_vdb is not None and all_chunks_data:
+                await self.chunks_vdb.upsert(all_chunks_data)
+            if self.text_chunks is not None and all_chunks_data:
+                await self.text_chunks.upsert(all_chunks_data)
+
             # Insert entities into knowledge graph
             all_entities_data = []
             for entity_data in custom_kg.get("entities", []):
                 entity_name = f'"{entity_data["entity_name"].upper()}"'
                 entity_type = entity_data.get("entity_type", "UNKNOWN")
                 description = entity_data.get("description", "No description provided")
-                source_id = entity_data["source_id"]
+                # source_id = entity_data["source_id"]
+                source_chunk_id = entity_data.get("source_id", "UNKNOWN")
+                source_id = chunk_to_source_map.get(source_chunk_id, "UNKNOWN")
+
+                # Log if source_id is UNKNOWN
+                if source_id == "UNKNOWN":
+                    logger.warning(
+                        f"Entity '{entity_name}' has an UNKNOWN source_id. Please check the source mapping."
+                    )
 
                 # Prepare node data
                 node_data = {
@@ -359,7 +385,15 @@ class LightRAG:
                 description = relationship_data["description"]
                 keywords = relationship_data["keywords"]
                 weight = relationship_data.get("weight", 1.0)
-                source_id = relationship_data["source_id"]
+                # source_id = relationship_data["source_id"]
+                source_chunk_id = relationship_data.get("source_id", "UNKNOWN")
+                source_id = chunk_to_source_map.get(source_chunk_id, "UNKNOWN")
+
+                # Log if source_id is UNKNOWN
+                if source_id == "UNKNOWN":
+                    logger.warning(
+                        f"Relationship from '{src_id}' to '{tgt_id}' has an UNKNOWN source_id. Please check the source mapping."
+                    )
 
                 # Check if nodes exist in the knowledge graph
                 for need_insert_id in [src_id, tgt_id]:
```
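The new chunk loop keys each chunk by an ID derived from its stripped content and records a map from `source_id` to `chunk_id`, which entities and relationships are then resolved against. The sketch below re-creates that loop outside the class to make its consequences easy to inspect. `compute_chunk_id` and `build_chunk_maps` are hypothetical names; `compute_chunk_id` is assumed to mirror `compute_mdhash_id` as prefix plus MD5 hex digest, since that function's definition is not shown in this diff.

```python
from hashlib import md5


def compute_chunk_id(content: str, prefix: str = "chunk-") -> str:
    # Assumed to mirror lightrag.utils.compute_mdhash_id: prefix + MD5 hex digest.
    return prefix + md5(content.encode()).hexdigest()


def build_chunk_maps(chunks: list[dict]) -> tuple[dict[str, dict], dict[str, str]]:
    """Re-creates the chunk loop added to ainsert_custom_kg, outside the class."""
    all_chunks_data: dict[str, dict] = {}
    chunk_to_source_map: dict[str, str] = {}
    for chunk in chunks:
        content = chunk["content"].strip()
        source_id = chunk["source_id"]
        chunk_id = compute_chunk_id(content)
        # Identical stripped content gives an identical ID, so only one entry is kept.
        all_chunks_data[chunk_id] = {"content": content, "source_id": source_id}
        # Keyed by source_id, so a later chunk with the same source_id wins.
        chunk_to_source_map[source_id] = chunk_id
    return all_chunks_data, chunk_to_source_map


chunks = [
    {"content": "  Alpha  ", "source_id": "S1"},
    {"content": "Alpha", "source_id": "S1"},  # same ID as the first chunk
    {"content": "Beta", "source_id": "S1"},  # replaces S1's mapping
]
data, source_map = build_chunk_maps(chunks)
# Resolution as in the commit: an unmapped source falls back to "UNKNOWN".
print(len(data), source_map.get("S1") == compute_chunk_id("Beta"), source_map.get("S2", "UNKNOWN"))
```

Two behaviors follow from this structure: chunks with identical stripped content collapse into one stored entry, and if several chunks share a `source_id`, entities citing that source resolve only to the last of them.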

lightrag/llm.py

CHANGED

```diff
@@ -502,11 +502,12 @@ async def gpt_4o_mini_complete(
         **kwargs,
     )
 
+
 async def nvidia_openai_complete(
     prompt, system_prompt=None, history_messages=[], keyword_extraction=False, **kwargs
 ) -> str:
     result = await openai_complete_if_cache(
-        "nvidia/llama-3.1-nemotron-70b-instruct",
+        "nvidia/llama-3.1-nemotron-70b-instruct",  # context length 128k
         prompt,
         system_prompt=system_prompt,
         history_messages=history_messages,
@@ -517,6 +518,7 @@ async def nvidia_openai_complete(
         return locate_json_string_body_from_string(result)
     return result
 
+
 async def azure_openai_complete(
     prompt, system_prompt=None, history_messages=[], keyword_extraction=False, **kwargs
 ) -> str:
@@ -610,12 +612,12 @@ async def openai_embedding(
     )
 async def nvidia_openai_embedding(
     texts: list[str],
-    model: str = "nvidia/llama-3.2-nv-embedqa-1b-v1",
+    model: str = "nvidia/llama-3.2-nv-embedqa-1b-v1",  # refer to https://build.nvidia.com/nim?filters=usecase%3Ausecase_text_to_embedding
     base_url: str = "https://integrate.api.nvidia.com/v1",
     api_key: str = None,
-    input_type: str = "passage",
-    trunc: str = "NONE",
-    encode: str = "float"
+    input_type: str = "passage",  # query for retrieval, passage for embedding
+    trunc: str = "NONE",  # NONE or START or END
+    encode: str = "float",  # float or base64
 ) -> np.ndarray:
     if api_key:
         os.environ["OPENAI_API_KEY"] = api_key
@@ -624,10 +626,14 @@ async def nvidia_openai_embedding(
         AsyncOpenAI() if base_url is None else AsyncOpenAI(base_url=base_url)
     )
     response = await openai_async_client.embeddings.create(
-        model=model,
+        model=model,
+        input=texts,
+        encoding_format=encode,
+        extra_body={"input_type": input_type, "truncate": trunc},
     )
     return np.array([dp.embedding for dp in response.data])
 
+
 @wrap_embedding_func_with_attrs(embedding_dim=1536, max_token_size=8191)
 @retry(
     stop=stop_after_attempt(3),
```
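`nvidia_openai_embedding` now sends `model`, `input`, and `encoding_format` as regular arguments and forwards the NVIDIA-specific `input_type` and `truncate` fields through the OpenAI client's `extra_body` parameter. The sketch below isolates that request construction and validates the values against the options listed in the new inline comments. `build_nvidia_embedding_kwargs` is a hypothetical helper; it only builds the arguments and does not call the API.

```python
from typing import Any

# Options as listed in the inline comments added to nvidia_openai_embedding.
ALLOWED_INPUT_TYPES = {"passage", "query"}
ALLOWED_TRUNC = {"NONE", "START", "END"}
ALLOWED_ENCODE = {"float", "base64"}


def _check(name: str, value: str, allowed: set[str]) -> None:
    if value not in allowed:
        raise ValueError(f"{name} must be one of {sorted(allowed)}, got {value!r}")


def build_nvidia_embedding_kwargs(
    texts: list[str],
    model: str = "nvidia/llama-3.2-nv-embedqa-1b-v1",
    input_type: str = "passage",
    trunc: str = "NONE",
    encode: str = "float",
) -> dict[str, Any]:
    """Hypothetical helper: builds the arguments that nvidia_openai_embedding
    passes to AsyncOpenAI().embeddings.create(), without calling the API."""
    _check("input_type", input_type, ALLOWED_INPUT_TYPES)
    _check("trunc", trunc, ALLOWED_TRUNC)
    _check("encode", encode, ALLOWED_ENCODE)
    return {
        "model": model,
        "input": texts,
        "encoding_format": encode,
        # Fields the OpenAI SDK does not model are merged into the JSON body via extra_body.
        "extra_body": {"input_type": input_type, "truncate": trunc},
    }


# Indexing side of the demo: passages, truncated server-side at the end.
print(build_nvidia_embedding_kwargs(["A passage."], model="nvidia/nv-embedqa-e5-v5", trunc="END"))
```

The result could be passed as `await client.embeddings.create(**kwargs)` on an `AsyncOpenAI` client pointed at `https://integrate.api.nvidia.com/v1`. Note that `nvidia_openai_embedding` converts `dp.embedding` directly into a NumPy array, which assumes `encode="float"`; with `"base64"` the OpenAI Python SDK is expected to return encoded strings rather than float lists.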

lightrag/operate.py

CHANGED

```diff
@@ -297,7 +297,9 @@ async def extract_entities(
         chunk_dp = chunk_key_dp[1]
         content = chunk_dp["content"]
         # hint_prompt = entity_extract_prompt.format(**context_base, input_text=content)
-        hint_prompt = entity_extract_prompt.format(
+        hint_prompt = entity_extract_prompt.format(
+            **context_base, input_text="{input_text}"
+        ).format(**context_base, input_text=content)
 
         final_result = await use_llm_func(hint_prompt)
         history = pack_user_ass_to_openai_messages(hint_prompt, final_result)
@@ -949,7 +951,6 @@ async def _find_related_text_unit_from_relationships(
         split_string_by_multi_markers(dp["source_id"], [GRAPH_FIELD_SEP])
         for dp in edge_datas
     ]
-
     all_text_units_lookup = {}
 
     for index, unit_list in enumerate(text_units):
```
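The new `hint_prompt` line formats the extraction prompt twice. One plausible reading, assuming some `context_base` values (for example few-shot examples) themselves contain placeholders such as `{tuple_delimiter}`: the first pass inlines those values while keeping `{input_text}` as a literal placeholder, and the second pass resolves the newly exposed placeholders and inserts the chunk. The template and context below are illustrative, not LightRAG's actual prompt, and `render_prompt` is a hypothetical name.

```python
def render_prompt(template: str, context_base: dict[str, str], content: str) -> str:
    """Two-pass formatting in the style of the new hint_prompt line."""
    # Pass 1: inline the context values; keep {input_text} as a literal placeholder.
    # Placeholders that were inside those values are now part of the template text.
    partially_filled = template.format(**context_base, input_text="{input_text}")
    # Pass 2: resolve the newly exposed placeholders and insert the chunk text.
    return partially_filled.format(**context_base, input_text=content)


# Illustrative template and context (not LightRAG's actual prompt).
template = "Examples:\n{examples}\nText: {input_text}"
context_base = {
    "tuple_delimiter": "<|>",
    "examples": '("entity"{tuple_delimiter}"Alex")',
}

single_pass = template.format(**context_base, input_text="chunk text")
two_pass = render_prompt(template, context_base, "chunk text")
print(single_pass)  # {tuple_delimiter} is still unresolved inside the example
print(two_pass)  # the example now uses <|>
```

Because `str.format` never re-parses substituted values, a single pass leaves `{tuple_delimiter}` unresolved inside the examples, while chunk text inserted in the final pass is safe even if it contains braces. One caveat of the two-pass approach: `{{` / `}}` escapes in the template are consumed by the first pass, so a literal brace that must survive both passes would have to be written as `{{{{`.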