EEGInceptionERP

EEG-Inception for ERP-based brain-computer interfaces (BCIs), from Santamaria-Vazquez et al. (2020) [santamaria2020].

Architecture-only repository documenting the `braindecode.models.EEGInceptionERP` class. No pretrained weights are distributed here; instantiate the model and train it on your own data.

Quick start

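```bash
pip install braindecode
```

```python
from braindecode.models import EEGInceptionERP

model = EEGInceptionERP(
    n_chans=22,                # number of EEG channels
    sfreq=250,                 # sampling frequency (Hz)
    input_window_seconds=4.0,  # length of each input window (s)
    n_outputs=4,               # number of output classes
)
```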

The signal-shape arguments above are illustrative values, not the model defaults (the paper uses 1 s windows sampled at 128 Hz); adjust them to match your recording.
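The quick-start call passes `input_window_seconds` and `sfreq` rather than `n_times`, so the window length in samples has to be derived from those two values. A minimal sketch of that arithmetic follows, assuming the length is truncated to a whole number of samples; `window_to_n_times` is a hypothetical helper written for illustration, not part of braindecode.

```python
def window_to_n_times(sfreq: float, input_window_seconds: float) -> int:
    """Hypothetical helper: number of samples in one input window.

    Assumption: the window length is truncated to an integer number
    of samples, i.e. int(sfreq * input_window_seconds).
    """
    if sfreq <= 0 or input_window_seconds <= 0:
        raise ValueError("sfreq and input_window_seconds must be positive")
    return int(sfreq * input_window_seconds)


# Quick-start values: 250 Hz x 4.0 s
print(window_to_n_times(250, 4.0))  # 1000
# Paper setting [santamaria2020]: 128 Hz x 1.0 s
print(window_to_n_times(128, 1.0))  # 128
```

Braindecode models take input tensors shaped `(batch_size, n_chans, n_times)`, so under this assumption the quick-start model expects batches shaped `(batch_size, 22, 1000)`.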

Documentation

- Full API reference: https://braindecode.org/stable/generated/braindecode.models.EEGInceptionERP.html

- Interactive browser (live instantiation, parameter counts): https://huggingface.co/spaces/braindecode/model-explorer

- Source on GitHub: https://github.com/braindecode/braindecode/blob/master/braindecode/models/eeginception_erp.py#L15

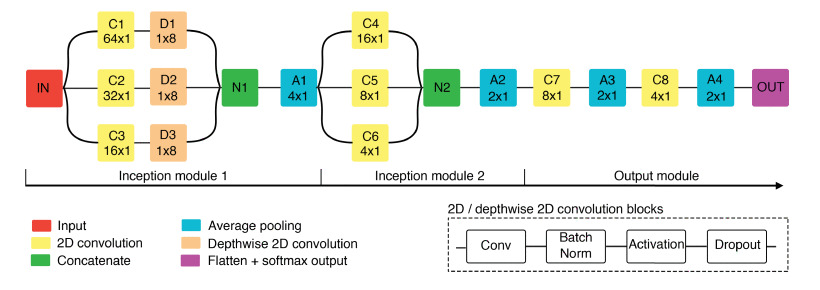

Architecture

Parameters

| Parameter | Type | Description |
|---|---|---|
| n_times | int, optional | Size of the input, in number of samples. Set to 128 (1 s) as in [santamaria2020]. |
| sfreq | float, optional | EEG sampling frequency (Hz). Defaults to 128 as in [santamaria2020]. |
| drop_prob | float, optional | Dropout rate applied throughout the network. Defaults to 0.5 as in [santamaria2020]. |
| scales_samples_s | list(float), optional | Temporal scales (s) of the convolutions in each Inception module; they determine the kernel sizes of the filters. Defaults to 0.5, 0.25 and 0.125 s, as in [santamaria2020]. |
| n_filters | int, optional | Initial number of convolutional filters. Defaults to 8 as in [santamaria2020]. |
| activation | nn.Module, optional | Activation function. Defaults to ELU as in [santamaria2020]. |
| batch_norm_alpha | float, optional | Momentum for BatchNorm2d. Defaults to 0.01. |
| depth_multiplier | int, optional | Depth multiplier for the depthwise convolution. Defaults to 2 as in [santamaria2020]. |
| pooling_sizes | list(int), optional | Pooling sizes for the Inception blocks. Defaults to 4, 2, 2 and 2, as in [santamaria2020]. |
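Several of these parameters interact through simple arithmetic: `scales_samples_s` and `sfreq` set the kernel lengths, and `pooling_sizes` sets how much the time axis shrinks. The sketch below illustrates this under stated assumptions: each Inception branch's kernel length is taken to be `int(scale_s * sfreq)` samples, convolutions are assumed to preserve temporal length ("same" padding), and each pooling stage is assumed to be non-overlapping (stride equal to its size, floor division). The helpers `kernel_sizes` and `pooled_lengths` are hypothetical and not part of braindecode.

```python
from typing import List, Sequence


def kernel_sizes(sfreq: float, scales_samples_s: Sequence[float]) -> List[int]:
    """Hypothetical helper: temporal kernel length (samples) per Inception branch."""
    return [int(scale * sfreq) for scale in scales_samples_s]


def pooled_lengths(n_times: int, pooling_sizes: Sequence[int]) -> List[int]:
    """Hypothetical helper: temporal length after each pooling stage.

    Assumes length-preserving convolutions and non-overlapping pooling,
    so each stage floor-divides the current length by its pooling size.
    """
    lengths = []
    length = n_times
    for size in pooling_sizes:
        length //= size
        lengths.append(length)
    return lengths


# Defaults from the table [santamaria2020]: 128 Hz, 1 s windows
print(kernel_sizes(128, [0.5, 0.25, 0.125]))  # [64, 32, 16]
print(pooled_lengths(128, [4, 2, 2, 2]))      # [32, 16, 8, 4]

# Quick-start recording: 250 Hz, 4 s windows (n_times = 1000)
print(kernel_sizes(250, [0.5, 0.25, 0.125]))  # [125, 62, 31]
print(pooled_lengths(1000, [4, 2, 2, 2]))     # [250, 125, 62, 31]
```

Under these assumptions, the default pooling stages downsample the time axis by 4 × 2 × 2 × 2 = 32, so a 128-sample window ends with 4 time steps. Inspecting the instantiated layers is the reliable way to confirm exact shapes for a given configuration.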

References

- [santamaria2020] Santamaria-Vazquez, E., Martinez-Cagigal, V., Vaquerizo-Villar, F., & Hornero, R. (2020). EEG-Inception: A novel deep convolutional neural network for assistive ERP-based brain-computer interfaces. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 28. Online: http://dx.doi.org/10.1109/TNSRE.2020.3048106

- Grifcc (2022). Implementation of EEGInception in PyTorch. Online: https://github.com/Grifcc/EEG/

- Wei, X., Faisal, A.A., Grosse-Wentrup, M., Gramfort, A., Chevallier, S., Jayaram, V., Jeunet, C., Bakas, S., Ludwig, S., Barmpas, K., Bahri, M., Panagakis, Y., Laskaris, N., Adamos, D.A., Zafeiriou, S., Duong, W.C., Gordon, S.M., Lawhern, V.J., Śliwowski, M., Rouanne, V. & Tempczyk, P. (2022). 2021 BEETL Competition: Advancing Transfer Learning for Subject Independence & Heterogeneous EEG Data Sets. Proceedings of the NeurIPS 2021 Competitions and Demonstrations Track, Proceedings of Machine Learning Research 176:205-219. Online: https://proceedings.mlr.press/v176/wei22a.html

Citation

Cite the original architecture paper (see References above) and braindecode:

```bibtex
@article{aristimunha2025braindecode,
  title = {Braindecode: a deep learning library for raw electrophysiological data},
  author = {Aristimunha, Bruno and others},
  journal = {Zenodo},
  year = {2025},
  doi = {10.5281/zenodo.17699192},
}
```

License

BSD-3-Clause for the model code (matching braindecode). If you fine-tune from a pretrained checkpoint, the resulting weights inherit the license of that checkpoint and of its training corpus.