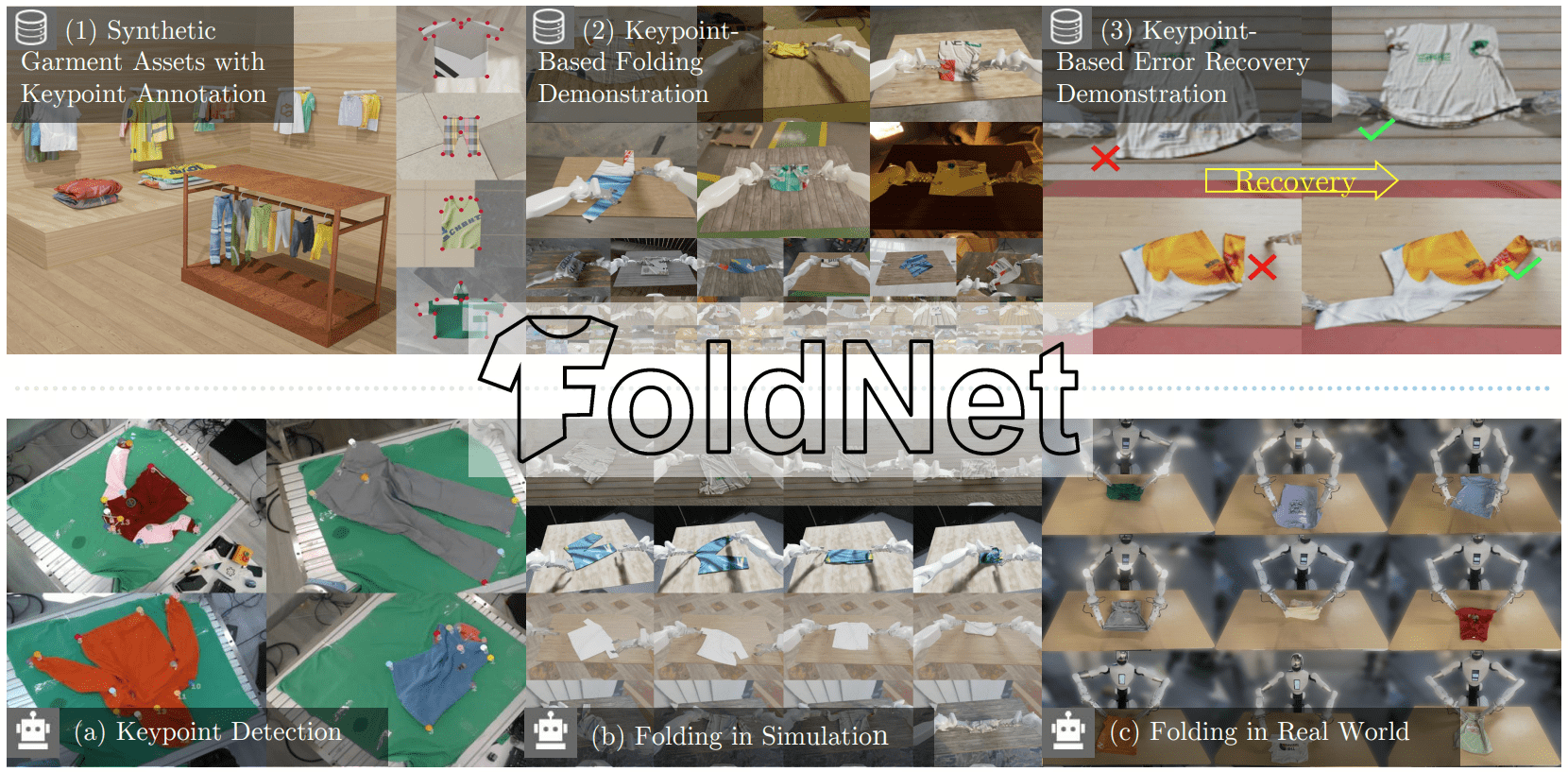

FoldNet Dataset

FoldNet is a high-fidelity synthetic dataset featuring over 4,000 unique meshes across four distinct garment categories. Designed to support a wide range of downstream applications—including robotic folding and cloth manipulation—FoldNet provides physically plausible geometries paired with photorealistic textures.

🔑 Key Features

- Diverse Cloth Categories: T-shirt, trousers, vest, and hoodie.
- High-Quality Meshes:
  - Watertight and manifold meshes.
  - No self-intersections.
  - Configurable resolution with adjustable vertex density and face sizing.
- Diverse and Realistic Textures: High-quality textures procedurally generated via Stable-Diffusion-3.5.
- Rich Annotation:
  - Automatically labeled manipulable keypoints for robotic interaction.
  - Pre-computed UV mapping for seamless texturing.
- Highly Scalable: A robust procedural framework that can generate an effectively unlimited variety of plausible garment shapes.
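The watertight and manifold claim can be spot-checked on any downloaded mesh. The sketch below is not part of the FoldNet tooling. It tests only one necessary condition: in a closed manifold mesh, every undirected edge is shared by exactly two faces. The helper names `parse_obj_faces` and `is_closed_edge_manifold` are hypothetical, and the parser assumes positive (absolute) OBJ face indices.

```python
from collections import Counter


def parse_obj_faces(text):
    """Return faces as tuples of 0-based vertex indices parsed from OBJ text."""
    faces = []
    for line in text.splitlines():
        parts = line.split()
        if not parts or parts[0] != "f":
            continue
        # A face corner is "v", "v/vt", "v//vn" or "v/vt/vn"; keep only v.
        # ASSUMPTION: indices are positive (no relative/negative OBJ indices).
        faces.append(tuple(int(p.split("/")[0]) - 1 for p in parts[1:]))
    return faces


def is_closed_edge_manifold(faces):
    """True if every undirected edge is used by exactly two faces."""
    edge_count = Counter()
    for face in faces:
        n = len(face)
        for i in range(n):
            a, b = face[i], face[(i + 1) % n]
            edge_count[(min(a, b), max(a, b))] += 1
    return bool(edge_count) and all(c == 2 for c in edge_count.values())
```

To check a file, pass its contents to `parse_obj_faces` and the result to `is_closed_edge_manifold`. An edge used once indicates a hole, and an edge used three or more times indicates a non-manifold junction. Vertex-level manifoldness and self-intersections need separate checks.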

🔥 Get started

To download the full dataset, you can use the following code. If you encounter any issues, please refer to the official Hugging Face documentation.

```bash
# Make sure you have git-lfs installed (https://git-lfs.com)
git lfs install

# If prompted for a password, use an access token (read access is enough for cloning).
# Generate one from your settings: https://huggingface.co/settings/tokens
git clone https://huggingface.co/datasets/Bowie375/FoldNet

# If you want to clone without large files - just their pointers
GIT_LFS_SKIP_SMUDGE=1 git clone https://huggingface.co/datasets/Bowie375/FoldNet
```
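If only some garment categories are needed, a partial download may be more convenient than a full clone. The following is a minimal sketch using `snapshot_download` from the `huggingface_hub` package (installed separately). It assumes the files sit under `mesh/<category>/` at the repository root, as the directory layout in this card describes. The helper names `category_patterns` and `download_categories` are hypothetical.

```python
def category_patterns(categories):
    """Build glob patterns selecting only the given category folders under mesh/."""
    # ASSUMPTION: categories are stored as mesh/<category>/... in the repository.
    return [f"mesh/{name}/*" for name in categories]


def download_categories(categories, local_dir="FoldNet"):
    """Download only the selected categories and return the local folder path."""
    # Imported lazily so category_patterns works without the package installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(
        repo_id="Bowie375/FoldNet",
        repo_type="dataset",
        allow_patterns=category_patterns(categories),
        local_dir=local_dir,
    )
```

For example, `download_categories(["trousers", "vest_close"])` would fetch only those two folders into `./FoldNet`.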

🗂️ Dataset Structure

Under the mesh directory, we provide raw cloth meshes with the default texture:

```
mesh
├── tshirt_sp                  # category
│   ├── 0
│   │   ├── mesh.obj           # generated mesh
│   │   ├── mesh.key.obj       # the same mesh with keypoints marked red
│   │   ├── mesh_info.json     # the configurations of the mesh, like edge length and keypoint index
│   │   ├── material.mtl
│   │   ├── material.png       # default texture
│   ├── 1
│   │   └── ...
│   ├── ...
├── trousers
│   ├── ...
├── vest_close
│   ├── ...
├── hooded_close
│   ├── ...
```
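The layout above can be walked with a few lines of standard-library Python. This is a sketch under stated assumptions, not an official loader. It assumes that `mesh_info.json` stores keypoint indices at `triangulation` → `keypoint_idx`, and that those indices are 0-based positions into the `v` lines of `mesh.obj`. Both should be verified against an actual file. The function names are hypothetical.

```python
import json
from pathlib import Path


def list_samples(mesh_root, category):
    """Return the sample directories of one category, numeric names first and in order."""
    dirs = [p for p in (Path(mesh_root) / category).iterdir() if p.is_dir()]
    dirs.sort(key=lambda p: (0, int(p.name), "") if p.name.isdigit() else (1, 0, p.name))
    return dirs


def read_vertices(obj_path):
    """Read the (x, y, z) vertex positions from the 'v' lines of an OBJ file."""
    vertices = []
    with open(obj_path, encoding="utf-8") as fh:
        for line in fh:
            parts = line.split()
            if parts and parts[0] == "v":
                vertices.append(tuple(float(x) for x in parts[1:4]))
    return vertices


def load_keypoints(sample_dir):
    """Map each keypoint name to the 3D positions of its vertices."""
    sample_dir = Path(sample_dir)
    info = json.loads((sample_dir / "mesh_info.json").read_text(encoding="utf-8"))
    # ASSUMPTION: indices live at info["triangulation"]["keypoint_idx"] and are
    # 0-based vertex indices into mesh.obj.
    keypoint_idx = info.get("triangulation", {}).get("keypoint_idx", {})
    vertices = read_vertices(sample_dir / "mesh.obj")
    return {name: [vertices[i] for i in idxs] for name, idxs in keypoint_idx.items()}
```

A typical use would be `for sample in list_samples("FoldNet/mesh", "trousers"): print(sample.name, load_keypoints(sample).keys())`.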

🛠️ Dataset Creation

FoldNet features a fully automated end-to-end data generation pipeline. Our framework procedurally synthesizes garment geometries, applies AI-driven texturing, and generates ground-truth annotations without human intervention.

For technical implementation details, source code, and step-by-step instructions to reproduce the dataset, please visit the FoldNet GitHub Repository.

📅 TODO List

- [2026.2] Released: 4k synthetic 3D garment assets (across 4 cloth categories), available in the mesh directory.

- To be released: textured cloth data.

Citation

```bibtex
@article{11359673,
  author={Chen, Yuxing and Xiao, Bowen and Wang, He},
  journal={IEEE Robotics and Automation Letters},
  title={FoldNet: Learning Generalizable Closed-Loop Policy for Garment Folding via Keypoint-Driven Asset and Demonstration Synthesis},
  year={2026},
  volume={},
  number={},
  pages={1-8},
  keywords={Clothing;Geometry;Imitation learning;Annotations;Trajectory;Training;Synthetic data;Pipelines;Grasping;Filtering;Bimanual manipulation;deep learning for visual perception;deep learning in grasping and manipulation},
  doi={10.1109/LRA.2026.3656770}}
```
